AURA MACHINE_ Experiment #1 Neural Synthesis PRiSM SampleRNN

An AV piece from my musique concrète and machine learning research residency with NOVARS, Centre for Innovation in Sound, University of Manchester, in collaboration with PRiSM. The piece premiered at the PRiSM FutureMusic3 event, Royal Northern College of Music, in June 2020, alongside works from our UNSUPERVISED machine learning for music working group.

About the piece_

‘The genuineness of a thing is the quintessence of everything about it since its creation that can be handed down, from its material duration to the historical witness that it bears.’

The Work of Art in the Age of Mechanical Reproduction, Walter Benjamin

A sound object has an aura.

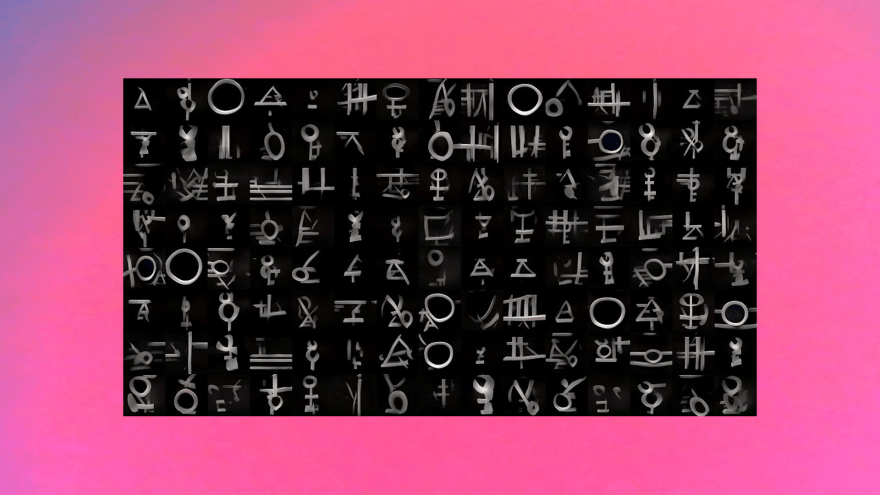

Taking the starting point of the sound object, a sonic fragment or atom of authentic matter, what happens to this materiality when processed by a neural network? What new sonic materials and aesthetics will emerge? Can the AI system project newly distilled hybrid forms or will the process of data compression result in a lo-fi statistical imitation?

For this piece, my first experiment with neural synthesis, I sought to collide the two disciplines of musique concrète and machine learning, taking the listener on a journey through the process of training a SampleRNN model.

A tale of two states, AURA MACHINE begins with the training data: the original source material comprising the concrete dataset. Field recordings were categorised into distinct classes: ‘Echoes of Industry’ (Manchester mill spaces), ‘Age of Electricity’ (DIY technology, noise and machinery) and ‘Materiality’ (glass fragments and metal sound sculptures). The second state is the purely AI-generated output audio.

Can a machine produce an aura?

AURA MACHINE residency article written for PRiSM, Royal Northern College of Music: READ HERE

AURA MACHINE RESIDENCY BLOG AND RESEARCH SITE HERE.

This project is kindly supported by Arts Council England.
