The AURA MACHINE research website is now live. The platform collates the research and creative outputs from my two-year artist residency with NOVARS, the Centre for Innovation in Sound at the University of Manchester, where I have been exploring the collision of musique concrète and machine learning. The residency was supported by the European Arts Science Technology Network for Digital Creativity, run in collaboration with PRiSM Lab (Practice Research in Science and Music) at the Royal Northern College of Music, and kindly supported by Arts Council England.

Framed by my research question, “how can concrete materials and neural networks project future sonic realities?”, the site sets out the critical rationale for the exploration: could machine learning, specifically sample-based neural synthesis, be a tool for contemporary musique concrète?

Throughout the research I developed skills in building datasets of concrete sounds, technical knowledge of neural synthesis, and methodologies for listening, composition and performance when working with these materials. The musical output took the form of my piece AURA MACHINE, whose first iteration was a 10-minute AV piece showcased at the FutureMusic#3 event at the RNCM.

‘What happens to the sound object when processed by a neural network?’

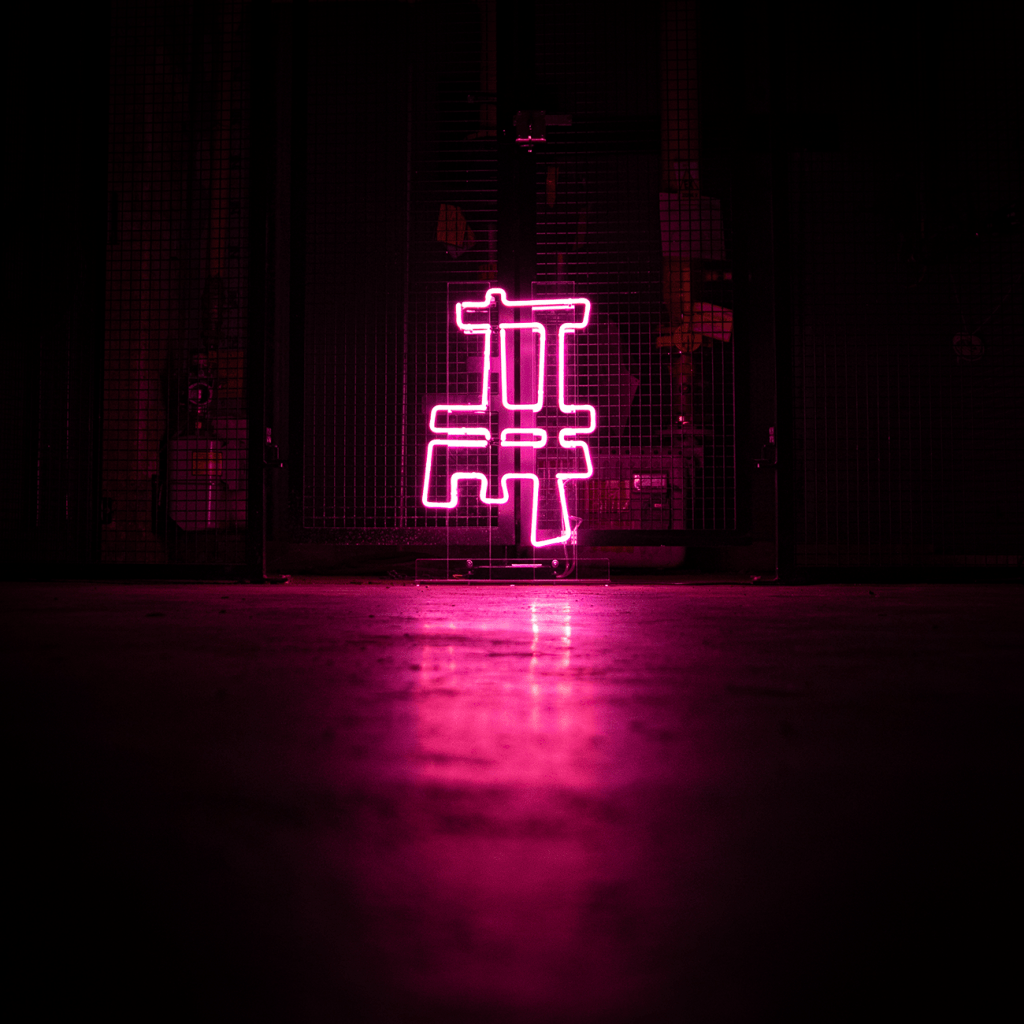

AURA MACHINE

‘A sound object has an AURA; can a machine generate an AURA?’

The piece took the listener on a journey through the training of a neural network, from the raw concrete materials (representing the sonic dataset), through transmutation (model training), to the purely AI-generated materials. Conceptually, the piece played with both Pierre Schaeffer’s idea of the sound object and Walter Benjamin’s ‘The Work of Art in the Age of Mechanical Reproduction’, with its idea of a work of art being authentic to a time and place. The AV piece was further developed into a 20-minute live performance with sound sculpture and audio-reactive visuals (a collaboration with Sean Clarke), leading to performances at Manchester Science Museum and a lead feature for PatchNotes, Factmag.

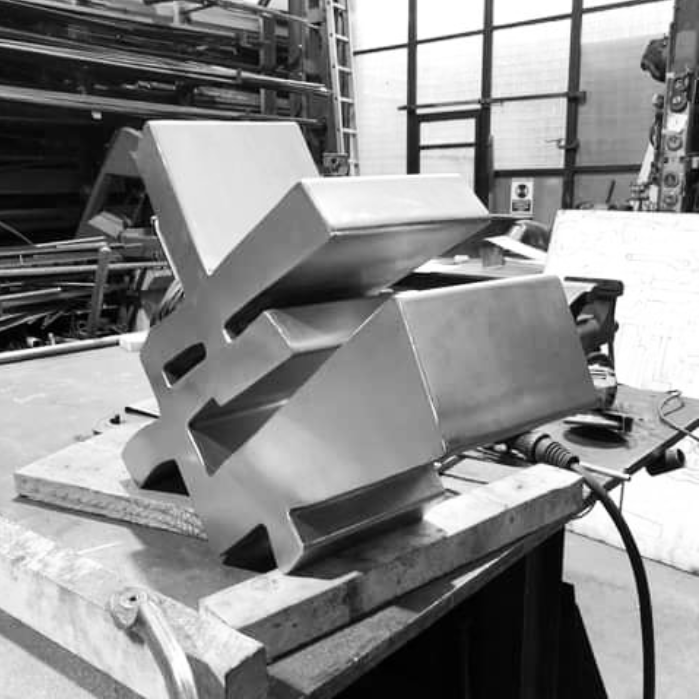

Support from Arts Council England also meant I could develop new systems for algorithmic sound sculpture and circuit designs. This work combined the creation of an ancient alchemical dataset for visual generation (I found many parallels between alchemy and modern-day ML) with contemporary prototyping tools, including 3D geometric design in Blender and 3D-printed models made before professional fabrication.

One aim of the residency was to demystify machine learning: to break open the hidden black box and, by examining both the inherent barriers and the currently accessible tools, share insights and approaches that help other artists create with sonic AI. To support others in using these tools and techniques, I created ‘DREAMING WITH MACHINES’, an educational project for young women, with Brighter Sound. We looked at the technical history of AI, inherent bias in datasets, the positive aspects of the turn towards creative AI and its potential to imagine future societies and realities. We discussed our relationship with machines, and the participants created their own datasets and audiovisual pieces, which premiered online as ‘dream transmissions’ and were showcased at the Unsupervised#3 event at the University of Manchester.